
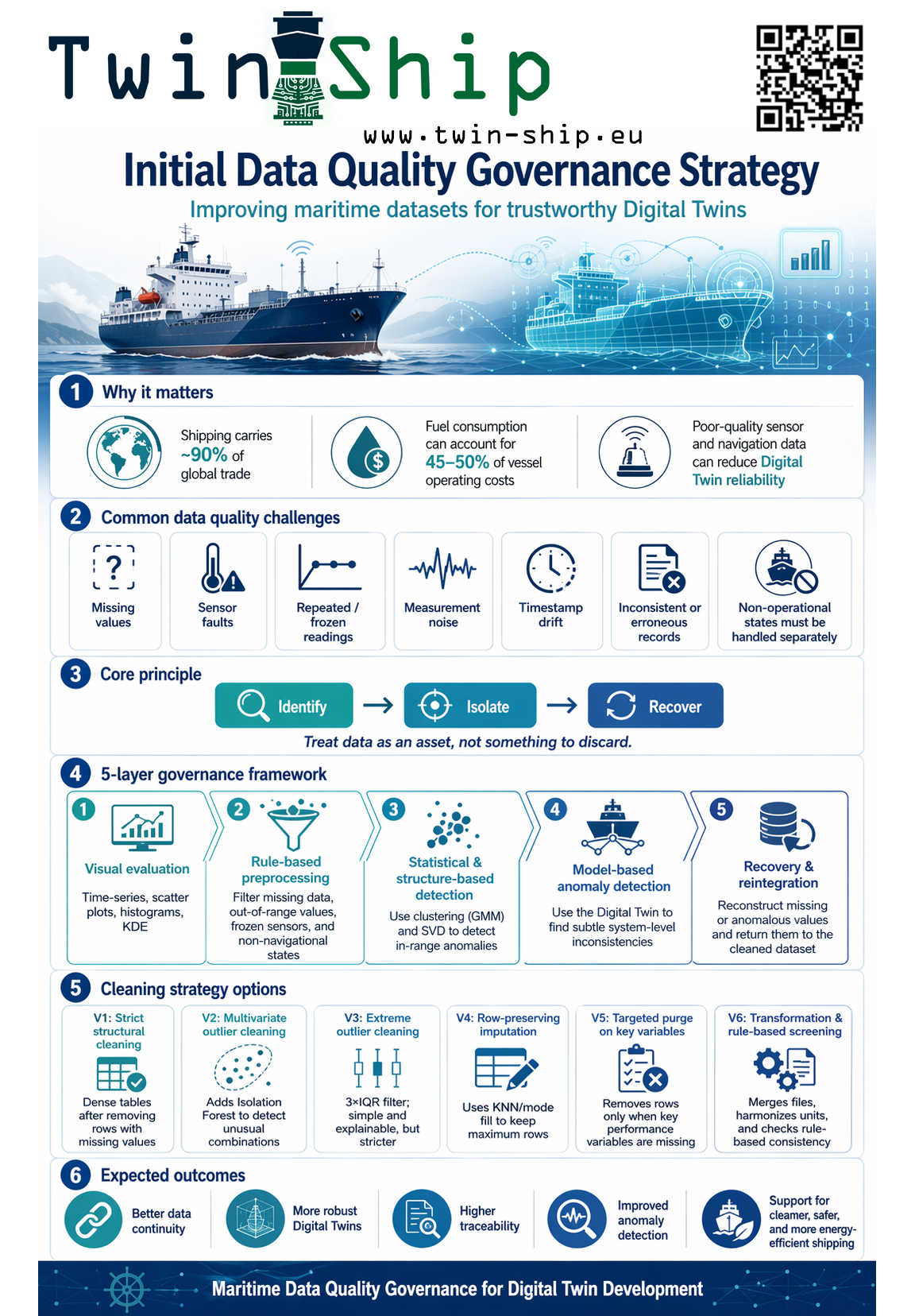

🚢 Data quality is the foundation of trustworthy maritime digital twins.

As shipping becomes increasingly digitalized, vessels generate large volumes of operational and navigation data that can support condition monitoring, fuel efficiency improvement, route optimization, and digital twin development. But this data is often affected by missing values, sensor faults, repeated readings, measurement noise, and inconsistent records.

In our latest deliverable, the Initial Data Quality Governance Strategy (DQGS), we present a structured approach to improving the quality of maritime datasets before and during digital twin development. The full deliverable is available for download: Data Quality Governance Strategy (DQGS).

The DQGS is built around a layered framework that combines:

✅ Visual data evaluation

✅ Rule-based preprocessing using domain knowledge

✅ Metadata-driven quality checks

✅ Statistical and structure-based anomaly detection

✅ Digital-twin-supported anomaly detection and data recovery
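To make the layers more concrete, here is a minimal Python sketch of two of them: rule-based checks that use domain knowledge, and a simple statistical anomaly check. It is not taken from the DQGS deliverable. The speed limits, the run length that signals a frozen sensor, and the robust z-score threshold are all illustrative assumptions.

```python
from statistics import median

# Hypothetical plausibility limits for speed over ground (knots).
# Real limits would come from vessel-specific domain knowledge or metadata.
SPEED_MIN, SPEED_MAX = 0.0, 30.0


def range_flags(values, lo, hi):
    """Rule-based check: flag missing readings and readings outside physical limits."""
    return [v is None or not (lo <= v <= hi) for v in values]


def stuck_flags(values, min_run=4):
    """Rule-based check: flag runs of at least `min_run` identical consecutive
    readings, a typical symptom of a frozen sensor (assumed run length)."""
    flags = [False] * len(values)
    start = 0
    for i in range(1, len(values) + 1):
        # A run ends at the end of the series or when the value changes.
        if i == len(values) or values[i] != values[start]:
            if i - start >= min_run:
                for j in range(start, i):
                    flags[j] = True
            start = i
    return flags


def mad_flags(values, threshold=3.5):
    """Statistical check: robust z-score based on the median absolute deviation."""
    clean = [v for v in values if v is not None]
    med = median(clean)
    mad = median(abs(v - med) for v in clean)
    if mad == 0:
        return [False] * len(values)
    return [v is not None and 0.6745 * abs(v - med) / mad > threshold for v in values]


# Toy series: one spike, one frozen run and one missing reading.
speed = [12.1, 12.3, 12.2, 45.0, 12.4, 12.4, 12.4, 12.4, 12.5, None, 12.6]
checks = [range_flags(speed, SPEED_MIN, SPEED_MAX), stuck_flags(speed), mad_flags(speed)]
combined = [any(f) for f in zip(*checks)]
print([i for i, bad in enumerate(combined) if bad])  # [3, 4, 5, 6, 7, 9]
```

In this toy series the frozen run of 12.4 knots sits exactly at the median, so the statistical check alone misses it while the rule-based run check catches it. That is one reason to combine several layers rather than rely on a single test.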

A key principle of the framework is that data should be treated as an asset, not simply discarded. Instead of removing all anomalous or incomplete data, the approach focuses on identifying, isolating, and recovering problematic records, which helps preserve valuable operational information while improving model reliability.
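The identify, isolate and recover principle can be sketched in a few lines of Python, assuming anomaly flags have already been produced by earlier checks. This is a minimal illustration, not the method used in the deliverable: linear interpolation between the nearest valid neighbours is an assumed recovery technique, and the `status` labels are hypothetical. Every row is kept, and the original reading is stored next to the recovered value for traceability.

```python
def isolate_and_recover(values, flags):
    """Keep every row. Flagged or missing readings are isolated and, where
    valid neighbours exist on both sides, recovered by linear interpolation."""
    n = len(values)
    valid = [i for i in range(n) if not flags[i] and values[i] is not None]
    result = []
    for i, (v, bad) in enumerate(zip(values, flags)):
        if not bad and v is not None:
            result.append({"value": v, "original": v, "status": "ok"})
            continue
        # Nearest valid readings before and after the isolated point.
        left = max((j for j in valid if j < i), default=None)
        right = min((j for j in valid if j > i), default=None)
        if left is None or right is None:
            # No bracketing readings: keep the row, but leave the value missing.
            result.append({"value": None, "original": v, "status": "isolated"})
        else:
            w = (i - left) / (right - left)
            est = values[left] + w * (values[right] - values[left])
            result.append({"value": est, "original": v, "status": "recovered"})
    return result


# A spike at index 2 (flagged earlier) and a trailing missing reading.
for row in isolate_and_recover([12.0, 13.0, 99.0, 15.0, None], [False, False, True, False, False]):
    print(row)
```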

The report also compares several preprocessing strategies, from strict structural cleaning and multivariate outlier filtering to row-preserving imputation and targeted cleaning of key performance variables. This provides flexibility when selecting the most suitable dataset for future modelling and analysis.
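The trade-off between three of these strategies can be illustrated on a small toy dataset. This sketch is an assumption-based illustration, not the report's implementation: the column names, units and median imputation are hypothetical choices, and multivariate outlier filtering is not shown.

```python
from statistics import median


def strict_clean(rows):
    """Strict structural cleaning: drop any row with a missing value in any column."""
    return [r for r in rows if all(v is not None for v in r.values())]


def targeted_clean(rows, key_vars):
    """Targeted cleaning: drop a row only if a key performance variable is missing."""
    return [r for r in rows if all(r[k] is not None for k in key_vars)]


def impute_median(rows):
    """Row-preserving imputation: fill each missing value with its column median."""
    cols = rows[0].keys()
    medians = {c: median(r[c] for r in rows if r[c] is not None) for c in cols}
    return [{c: (r[c] if r[c] is not None else medians[c]) for c in cols} for r in rows]


# Hypothetical records: speed (knots), shaft power (kW), wind speed (m/s).
rows = [
    {"speed": 12.1, "power": 5400.0, "wind": 8.0},
    {"speed": 12.3, "power": None, "wind": 7.5},
    {"speed": 12.2, "power": 5450.0, "wind": None},
    {"speed": 12.5, "power": 5500.0, "wind": 9.0},
]

for name, data in [
    ("strict", strict_clean(rows)),
    ("targeted", targeted_clean(rows, ["speed", "power"])),
    ("imputed", impute_median(rows)),
]:
    print(name, len(data))
```

Strict cleaning keeps the fewest rows, targeted cleaning keeps every row whose key variables are intact, and imputation keeps all rows at the cost of introducing estimated values.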

By integrating data governance directly into the digital twin workflow, the framework supports more reliable, traceable, and continuously improving maritime data-driven systems.

This work contributes to the development of trustworthy digital twins for cleaner, safer, and more energy-efficient shipping. 🌊⚓
